“Thanks for your insightful, and scary presentation.”

That lighthearted remark came from an Asian financial regulator after AnChain.AI presented at the Financial Action Task Force (FATF) Virtual Assets Contact Group (VACG) meeting, hosted in Paris on May 11, 2026.

The session, titled “AI-Powered Crypto Fraud: Threats, Trends & Typologies,” brought together global financial regulators to examine how artificial intelligence is reshaping fraud, cybercrime, money laundering, and virtual asset risk.

AnChain.AI was invited to present on this pressing topic to regulators from the U.S., APJ, EMEA, and the European Union. We were encouraged by the strong engagement and follow-up questions, because the core message is urgent: AI-driven financial crime is no longer theoretical. It is already targeting the global financial system.

What is FATF?

The Financial Action Task Force (FATF) is the global standard-setter for anti-money laundering, counter-terrorist financing, and counter-proliferation financing.

What is VACG?

The Virtual Assets Contact Group (VACG) is FATF’s group focused on virtual assets, virtual asset service providers (VASPs), crypto risks, and related policy implementation.

In AnChain.AI’s 2025 Digital Asset Risk Annual Report, we analyzed the top AI-driven crypto fraud incidents of the year. Across just 10 major AI-enabled cases, more than $2 billion was stolen. These incidents included exchange breaches, deepfake support scams, DeFi exploits, multisig key theft, automated price manipulation, and cross-chain laundering. More important than the individual losses was the pattern behind them: AI was not incidental. It was increasingly central to how attacks were planned, executed, scaled, and laundered.

The modern AI-enabled fraud stack is becoming clear:

LLMs generate the narrative. Deepfakes establish trust. Automated tools exploit logic. Bots execute and launder funds at machine speed.

This is a meaningful shift. Traditional fraud depended on human operators, manual scripting, and relatively slow iteration. AI changes the economics. It lowers the cost of persuasion, accelerates reconnaissance, automates exploit development, and compresses the timeline between compromise, theft, and laundering.

For regulators and investigators, the implication is simple: AI does not just exploit software vulnerabilities. It exploits latency, whether in human review, rule-based AML systems, cross-border coordination, or incident response.

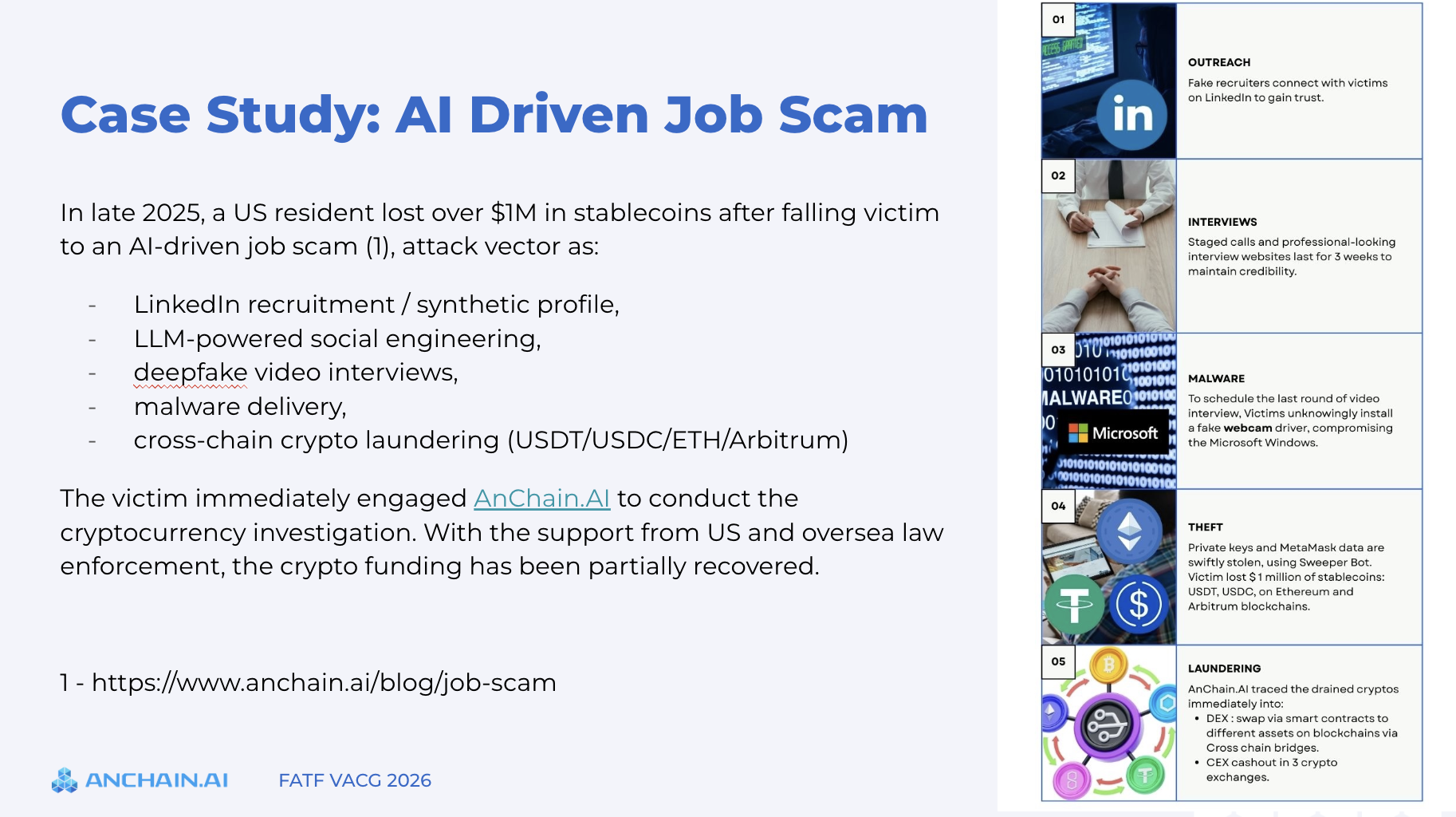

One case discussed during the FATF VACG session involved a California resident who contacted AnChain.AI after losing more than $1 million in stablecoins, primarily USDT and USDC, from a MetaMask wallet. The attack began as a sophisticated job recruitment scam. The victim was approached through LinkedIn, guided through a multi-week interview process, and eventually asked to install what appeared to be interview-related software. In reality, the software was malware designed to compromise wallet data and private keys.

The attack combined multiple AI-enabled components: fake recruiter personas, polished LLM-assisted communications, deepfake corporate identities, staged video interviews, endpoint compromise, automated wallet sweeping, and cross-chain laundering. After the compromise, funds moved from Ethereum to Arbitrum, were split across multiple addresses, swapped through DEX infrastructure, and partially routed toward off-ramp channels.
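The laundering path in this case (wallet sweep, bridge from Ethereum to Arbitrum, split across addresses, then DEX and off-ramp) is, at its core, a graph-traversal problem. The snippet below is a minimal sketch of that idea under stated assumptions, not AnChain.AI's tooling: it assumes transfers have already been decoded into a simple in-memory list, treats each (chain, address) pair as a node, and models the bridge as one cross-chain edge, which real bridges (separate contracts and relayers on each side) do not hand you directly. The names `Transfer`, `trace_downstream`, `split_points`, and `bridge_hops`, and all addresses and amounts, are hypothetical.

```python
from collections import deque
from dataclasses import dataclass

# A node is a (chain, address) pair, so the same address string on two
# chains is treated as two distinct locations of funds.
Node = tuple[str, str]


@dataclass(frozen=True)
class Transfer:
    src: Node
    dst: Node
    amount: float  # stablecoin units (e.g. USDT); hypothetical values below


def trace_downstream(transfers: list[Transfer], start: Node) -> dict[Node, int]:
    """Breadth-first search from `start`; returns each reachable node's hop count."""
    outgoing: dict[Node, list[Transfer]] = {}
    for t in transfers:
        outgoing.setdefault(t.src, []).append(t)

    hops = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for t in outgoing.get(node, []):
            if t.dst not in hops:  # visit each node once, at its shortest hop count
                hops[t.dst] = hops[node] + 1
                queue.append(t.dst)
    return hops


def split_points(transfers: list[Transfer]) -> set[Node]:
    """Nodes that send funds to more than one distinct destination (fan-out)."""
    destinations: dict[Node, set[Node]] = {}
    for t in transfers:
        destinations.setdefault(t.src, set()).add(t.dst)
    return {node for node, dsts in destinations.items() if len(dsts) > 1}


def bridge_hops(transfers: list[Transfer]) -> list[Transfer]:
    """Transfers whose source and destination sit on different chains."""
    return [t for t in transfers if t.src[0] != t.dst[0]]


# Hypothetical addresses and amounts for illustration only; not data from the case.
ETH, ARB = "ethereum", "arbitrum"
TRANSFERS = [
    Transfer((ETH, "0xVICTIM"), (ETH, "0xSWEEPER"), 1_000_000),
    Transfer((ETH, "0xSWEEPER"), (ARB, "0xSWEEPER"), 1_000_000),  # modeled bridge hop
    Transfer((ARB, "0xSWEEPER"), (ARB, "0xSPLIT_A"), 400_000),
    Transfer((ARB, "0xSWEEPER"), (ARB, "0xSPLIT_B"), 350_000),
    Transfer((ARB, "0xSWEEPER"), (ARB, "0xSPLIT_C"), 250_000),
    Transfer((ARB, "0xSPLIT_A"), (ARB, "DEX_POOL"), 400_000),
    Transfer((ARB, "0xSPLIT_B"), (ARB, "OFFRAMP_DEPOSIT"), 350_000),
]

hops = trace_downstream(TRANSFERS, (ETH, "0xVICTIM"))
for node, depth in sorted(hops.items(), key=lambda kv: kv[1]):
    print(depth, node)
print("split points:", split_points(TRANSFERS))
print("bridge hops:", len(bridge_hops(TRANSFERS)))
```

Breadth-first search returns every downstream location with its hop distance from the victim wallet, and fan-out nodes mark where funds were split. Real investigations would also need value attribution across mixed inputs, matching of bridge deposit and release events, and entity labels for DEX pools and off-ramps; this sketch attempts none of those.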

This case illustrates a broader trend: AI is being used to attack trust itself. Professional identity, video presence, language fluency, and process familiarity are all becoming programmable. For crypto professionals, VASPs, and financial institutions, the risk is no longer limited to phishing emails. The new threat model is a credible, AI-generated human workflow.

AI is also changing smart contract exploitation. AnChain.AI has supported U.S. law enforcement and industry partners on major DeFi exploit investigations since 2021, including cases such as KyberSwap and Crema. Our field experience shows that DeFi exploits often require rapid understanding of contract logic, transaction flows, liquidity mechanics, and attacker execution paths.

Recent Anthropic red-team research shows how quickly AI agents are advancing in this domain. Anthropic benchmarked AI agents against 405 real-world exploited smart contracts using SCONE-bench. On contracts exploited after the models’ knowledge cutoffs, Claude Opus 4.5, Claude Sonnet 4.5, and GPT-5 collectively generated exploits worth $4.6 million in simulated stolen funds. The study also tested agents against 2,849 recently deployed contracts and found novel zero-day vulnerabilities in simulation, concluding that “profitable, real-world autonomous exploitation is technically feasible.”

This does not mean every DeFi exploit today is AI-generated. But it does show that AI copilots can reduce the expertise, time, and cost required to discover and operationalize vulnerabilities. In the hands of attackers, that creates scale. In the hands of defenders, it creates an opportunity: continuous AI-assisted review, exploit simulation, behavioral monitoring, and faster investigative reconstruction.

Frontier AI developers are strengthening safety controls, which is necessary and welcome. However, the ecosystem is asymmetric. Ethical researchers and defenders may face stronger restrictions, while threat actors increasingly turn to open-weight, self-hosted, or underground models with fewer controls.

Google Threat Intelligence Group has observed adversaries moving beyond using AI for productivity and toward AI-enabled malware, guardrail bypass attempts, illicit AI tooling, and deepfake and image generation for phishing operations or KYC bypass. It also reported that North Korean threat actors misused Gemini for crypto-related reconnaissance, lure generation, and attempts to craft credential-theft instructions, alongside deepfake image and video lures targeting the cryptocurrency industry.

This creates a policy challenge: how do we preserve responsible AI research and defense while reducing abuse by unrestricted systems and criminal AI services?

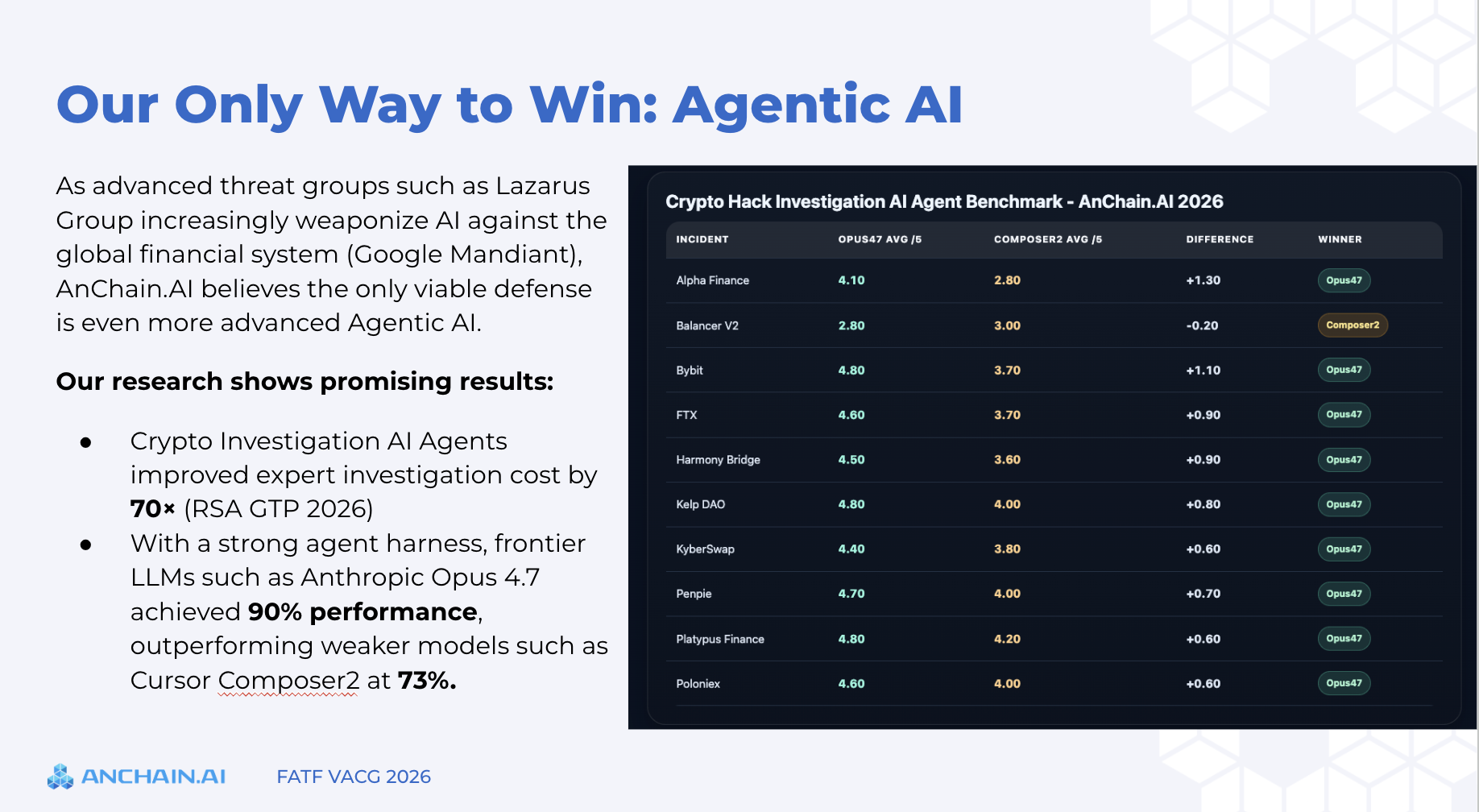

If attackers weaponize AI, defenders need more advanced AI.

At AnChain.AI, we are building AI-native crypto compliance and investigation systems that combine deterministic blockchain analytics with agentic reasoning. The goal is not to replace investigators, compliance officers, or regulators. The goal is to help them operate at machine speed, with explainability suitable for enforcement, supervision, and court-ready reporting.

AI-powered crypto fraud is scary, and it is already here.

The policy response should focus on capability, coordination, and speed.

1. FATF guidance should expand to address AI-enabled money laundering, terrorist financing, and proliferation financing (ML/TF/PF) risks, including deepfake impersonation, synthetic identities, AI-generated phishing, autonomous laundering, and AI-assisted exploit development.

2. Governments and regulators should invest in AI-native investigation capabilities. Traditional workflows cannot keep pace with machine-speed attacks. Investigators need tools that reason across chains, entities, behaviors, bridge activity, sanctions exposure, and off-ramp risk in real time.

3. International coordination must evolve around AI compute, identity integrity, and cross-border laundering. Low-cost energy regions and GPU data center hubs may become strategically important as AI-enabled crime scales globally.

To assess your institution’s exposure to AI-enabled crypto crime, schedule a call with AnChain.AI’s crypto AML, compliance, and investigation experts here: https://www.anchain.ai/demo